A tabletop exercise can feel like the "easy" kind of exercise: no deployments, no field gear, no logistics gauntlet. Yet many organizations still dread the part that comes after — the after-action report (AAR). Not because the writing is inherently hard, but because the exercise didn't generate clean, defensible evidence in the first place.

If you want AAR success, the highest-leverage move is to run tabletop exercises that are designed for evaluation and documentation from the start. The good news: you do not need a bigger team or more hours. You need tighter intent, better capture, and a workflow that turns decisions into findings.

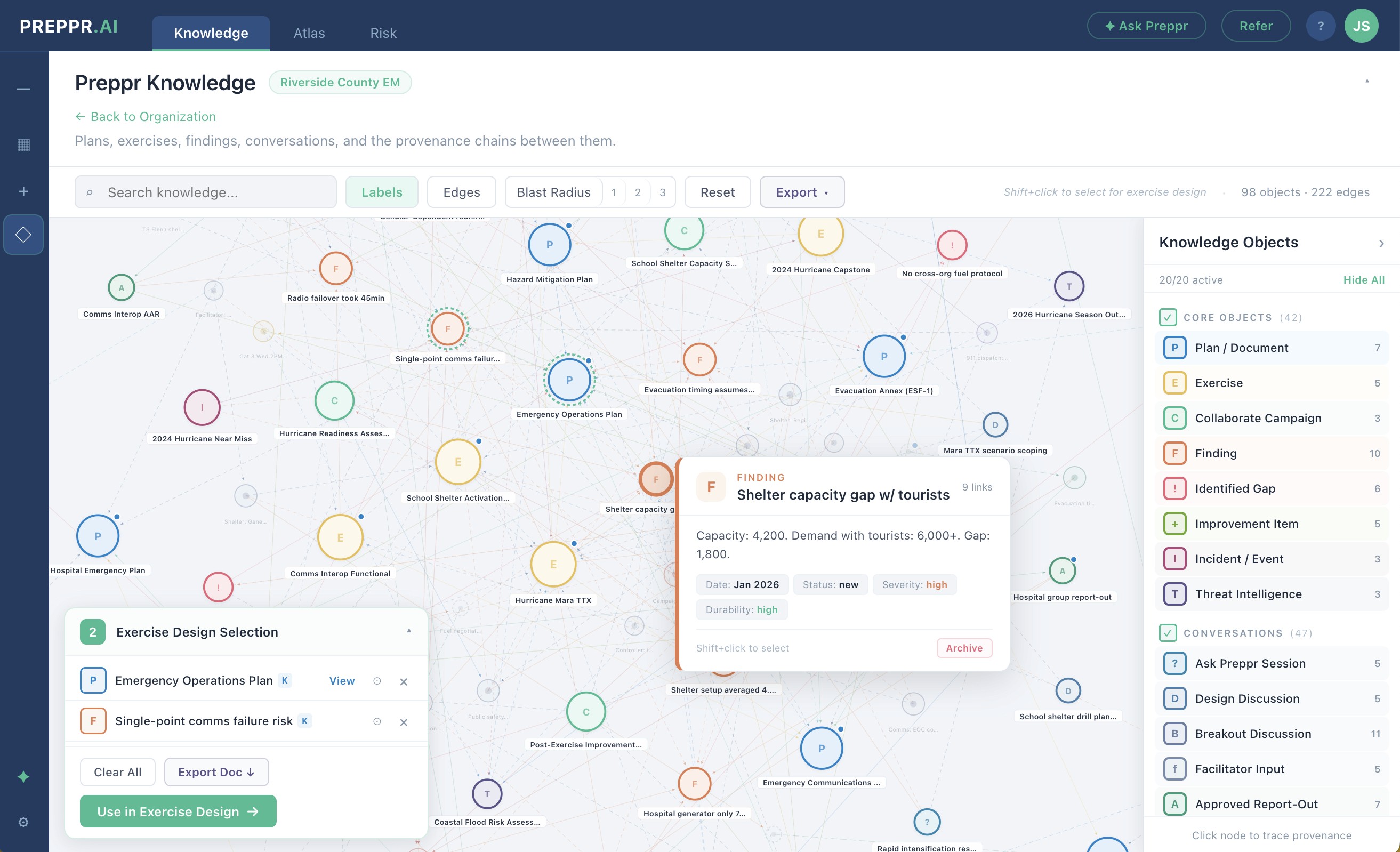

This guide covers both the manual discipline behind high-quality exercise documentation and how purpose-built platforms like Preppr can compress the timeline across every phase, from design to delivery to the final after-action report and improvement plan (AAR/IP).

Why AARs drag (and why they disappoint)

Most slow AAR cycles trace back to a few predictable failure modes:

Objectives are too broad ("test communications") or too many, so notes sprawl and analysis becomes subjective.

No evaluation plan exists beyond "take notes," so the team cannot distinguish a meaningful gap from a normal discussion tangent.

Decisions are not time-stamped or attributed, which forces the author to reconstruct the story later from memory.

Key artifacts are missing (situation updates, draft messages, resource requests, policy calls), leaving the AAR heavy on opinions and light on evidence.

Corrective actions are vague ("improve coordination"), which makes the AAR easy to finalize but useless to implement.

FEMA's Homeland Security Exercise and Evaluation Program (HSEEP) has emphasized this for years: evaluation and improvement planning are not an afterthought — they are part of the exercise lifecycle. If your organization uses HSEEP (or borrows its logic), your tabletop exercise should be engineered to produce an AAR/IP with minimal friction.

The root problem is that most organizations treat exercise design, exercise delivery, and exercise documentation as three separate projects. They are not. They are one continuous workflow, and the quality of the final AAR is determined almost entirely by decisions made in the first two phases.

Phase 1: Design for documentation from the start

Write fewer, sharper objectives — and define what "good" looks like

Strong objectives are specific enough to evaluate and narrow enough to capture. A practical target for most tabletop exercises is 3 to 5 objectives.

Instead of writing objectives that describe topics, write objectives that describe observable performance.

Example shift:

Topic-style: "Discuss information sharing."

Performance-style: "Demonstrate how the organization validates, approves, and disseminates public information within X minutes across identified channels, including accessibility and language considerations."

You do not need to force numeric targets into every objective, but you do need observable criteria. Otherwise, your AAR becomes a debate.

Decide what evidence you will accept

Before the exercise, define what counts as evidence for each objective. Evidence can be:

A decision ("We will activate the JIC at 0900, led by…")

An artifact (draft warning message, ICS 214 entry, resource request)

A demonstrated process (who approves what, what tool is used, where it is documented)

A simple mapping prevents "blank page AAR syndrome":

| Objective | Evidence to capture | Primary capture method | Owner |
|---|---|---|---|
| Public information approval and release | Time of decision, approver, channels, draft message text, accessibility checks | Time-stamped decision log + artifact collection | Scribe + PIO evaluator |
| Resource prioritization | Decision criteria, competing demands, unmet needs, mutual aid triggers | Decision log + evaluator notes tied to objective | Operations evaluator |
| Cyber incident coordination | Escalation path, containment decisions, legal/privacy constraints, vendor contact | Decision log + artifact capture | IT/security evaluator |

Build an evaluation structure that matches your reality

HSEEP provides a formal structure (capabilities, critical tasks, performance measures). Some organizations adopt it fully. Others adapt it.

Either way, pick a structure your team will actually use — capability-based, function-based, or risk-based. The best structure is the one that lets evaluators tag observations consistently, so analysis writes itself later.

Build your injects around decision points, not discussion topics

The most common tabletop design failure is structuring modules around topics instead of forcing decisions. "Discuss your communications approach" produces meandering conversation. "You have 47 confirmed measles cases in three schools, the regional hospital is at 95% capacity, and your public health director is asking whether to activate the JIC. What do you do?" produces a decision.

Design each inject to force a specific, evaluable choice:

"Do you activate? At what level? Who has authority?"

"What is your public message, and who approves it?"

"What mutual aid triggers apply, and what information is required?"

"What do you stop doing to free capacity?"

When your scenario is structured this way, your AAR analysis becomes straightforward because you have comparable decision moments across exercises.

How Preppr accelerates exercise design

Exercise design is where most organizations spend the most time and where the most institutional knowledge gets lost. Preppr's Exercise Designer addresses this directly.

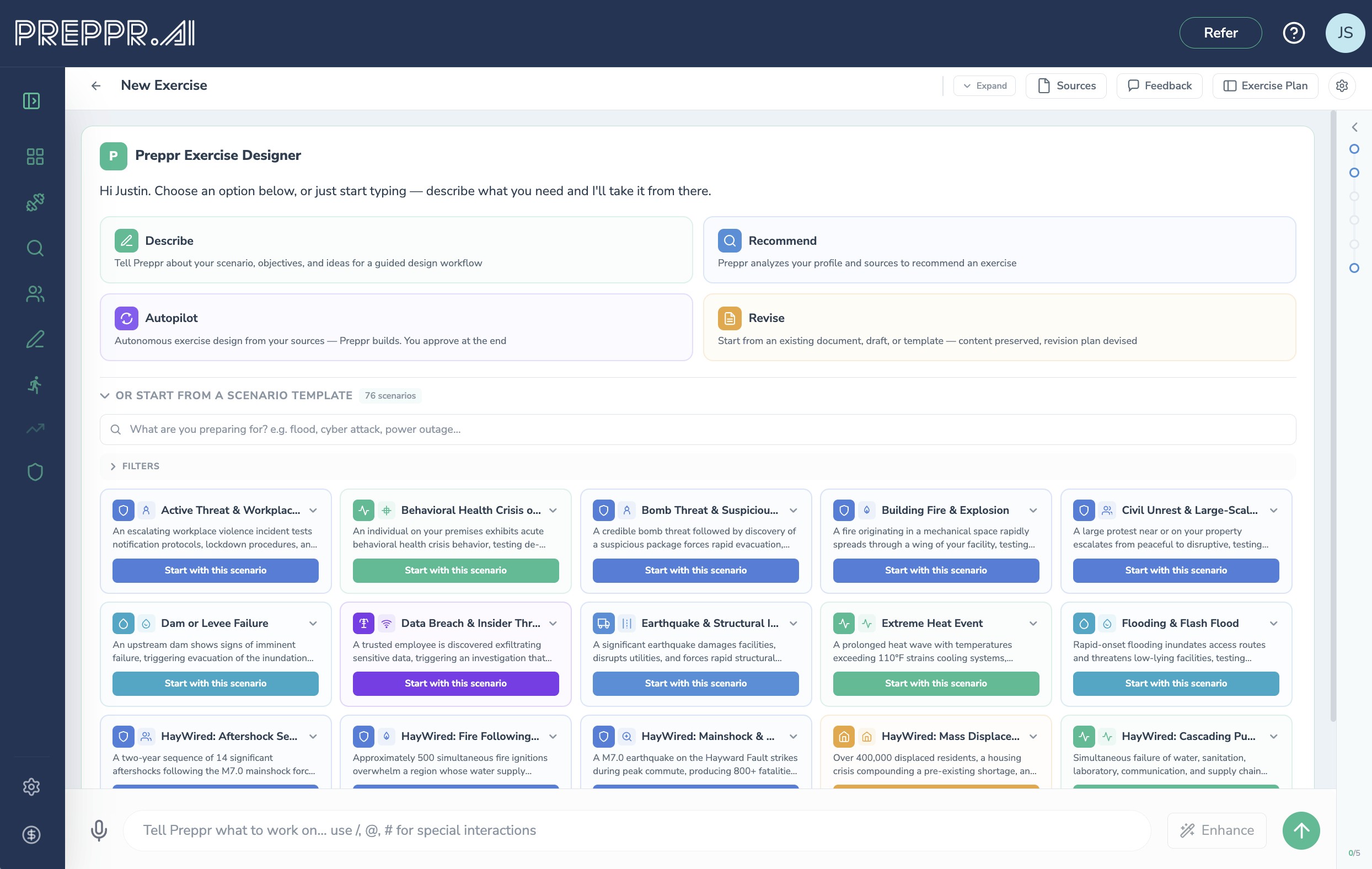

The platform offers four design entry modes — Describe, Recommend, Autopilot, and Revise — so you can generate a complete exercise framework in minutes rather than days, starting from whatever level of detail you have. A library of 76+ scenario starter cards grounded in real incidents gives planners a defensible, doctrine-aligned foundation rather than a blank page.

Revise mode deserves particular attention for organizations that already have exercise materials. It accepts Situation Manuals (SitMans) filled with redlines and reviewer comments, adjudicates the feedback, preserves existing content, and maps downstream dependencies before presenting a proposed change plan. After human approval, Preppr updates the exercise and produces new documentation along with a crosswalk that records every change accepted or rejected and the reasoning behind each decision. The result is an auditable revision history, something most organizations currently manage through email threads and tracked-changes documents.

When you start an exercise design, Preppr Intelligence automatically pulls real-time threat intelligence from open sources — current incident data, active advisories, recent events matching your hazard type and geography. This context flows directly into scenario generation, grounding your narrative in what's actually happening rather than generic templates. The result is a scenario that feels current, locally relevant, and professionally informed without requiring the planner to manually research and synthesize threat data before they can start designing.

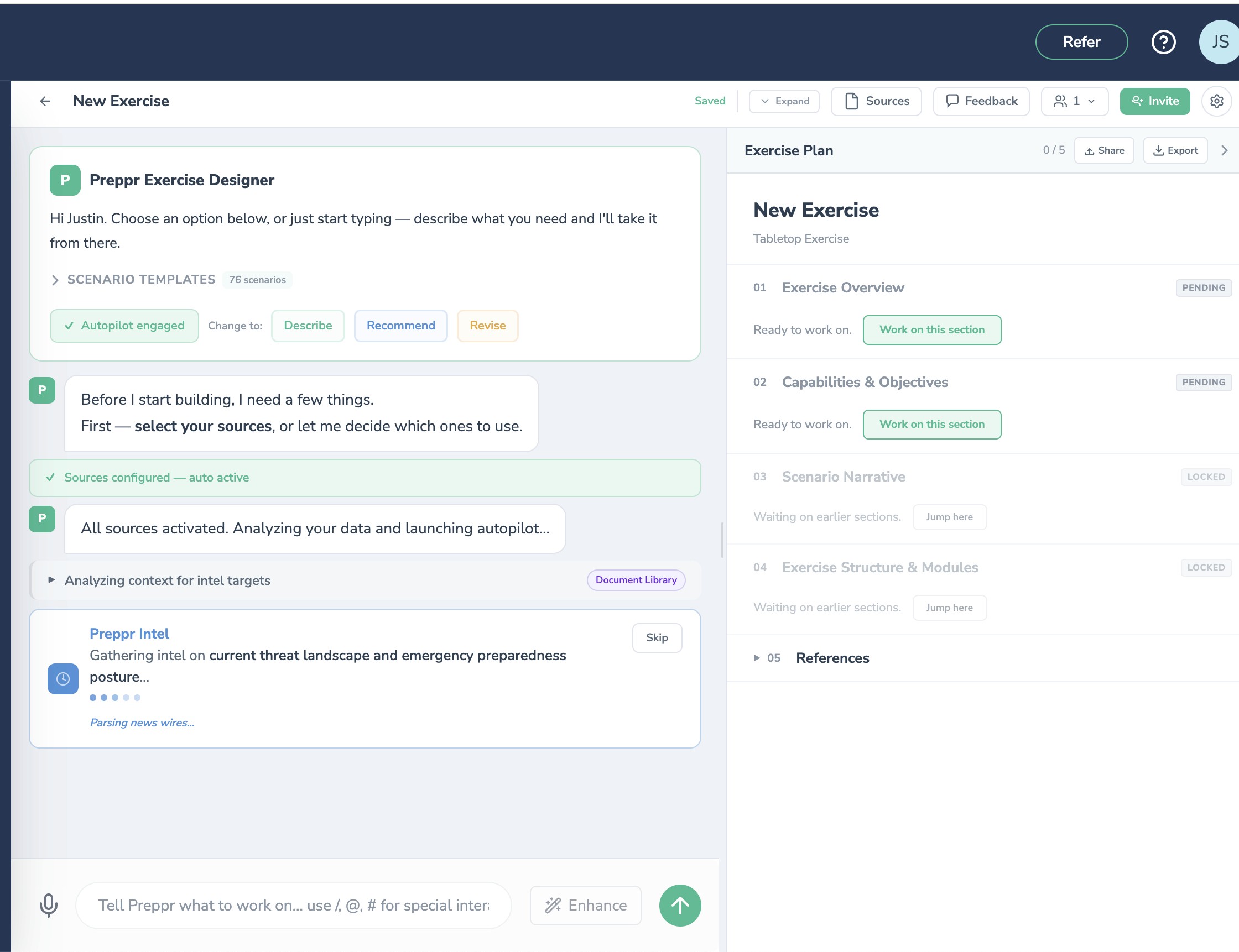

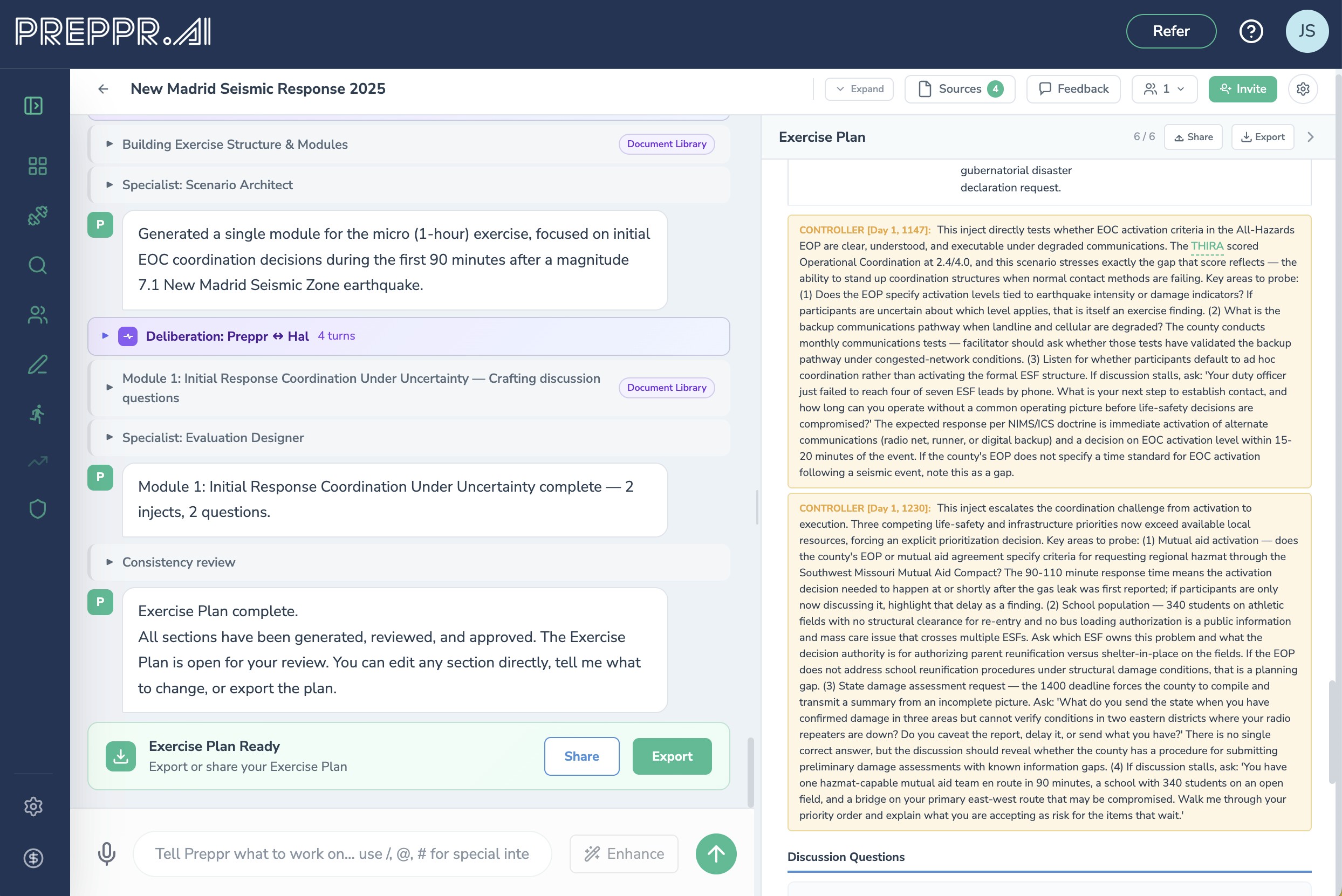

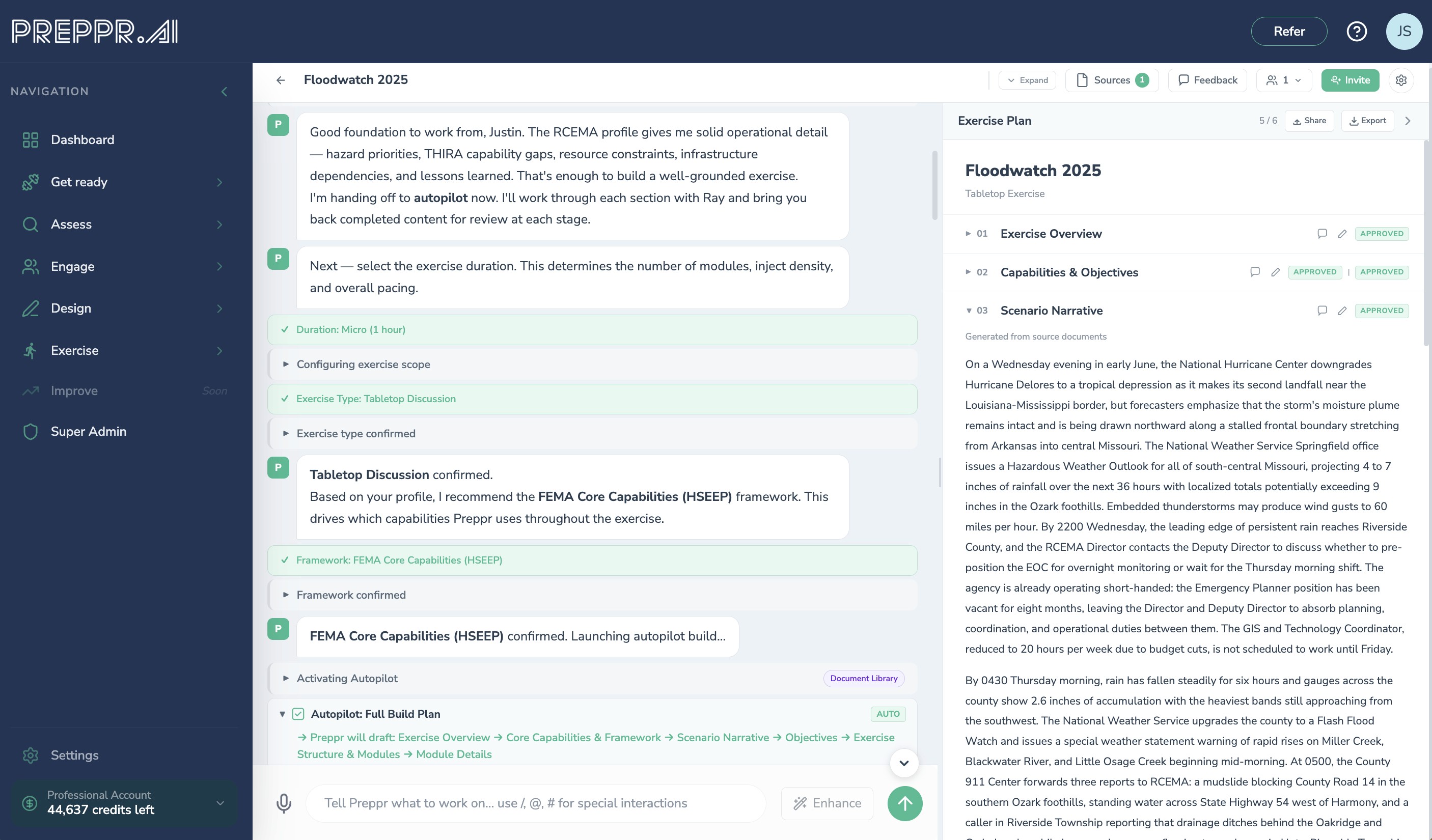

Autopilot is Preppr's fully automated exercise design mode. Point it at your source documents — threat assessments, after-action reports, THIRAs, planning documents — and walk away. Autopilot analyzes your materials, infers the right hazard, audience, capabilities, and objectives, and presents a Discovery Summary for your review before it builds. Once you confirm, it runs the complete generation pipeline end-to-end: scenario narrative, objectives, modules, injects, discussion questions, controller notes, and MSEL. Come back to a complete, review-ready exercise plan. From there, you revise what needs refinement and approve what doesn't.

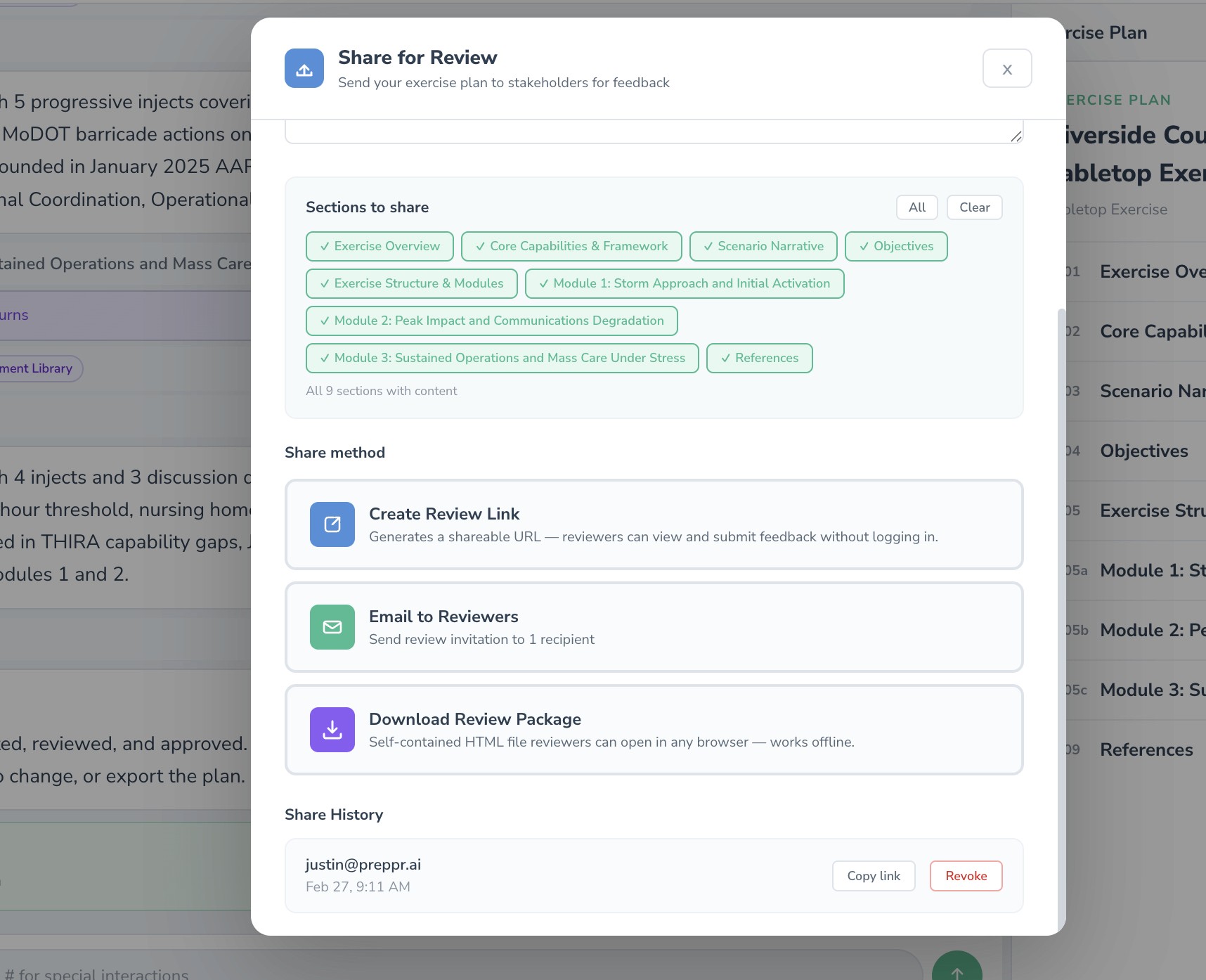

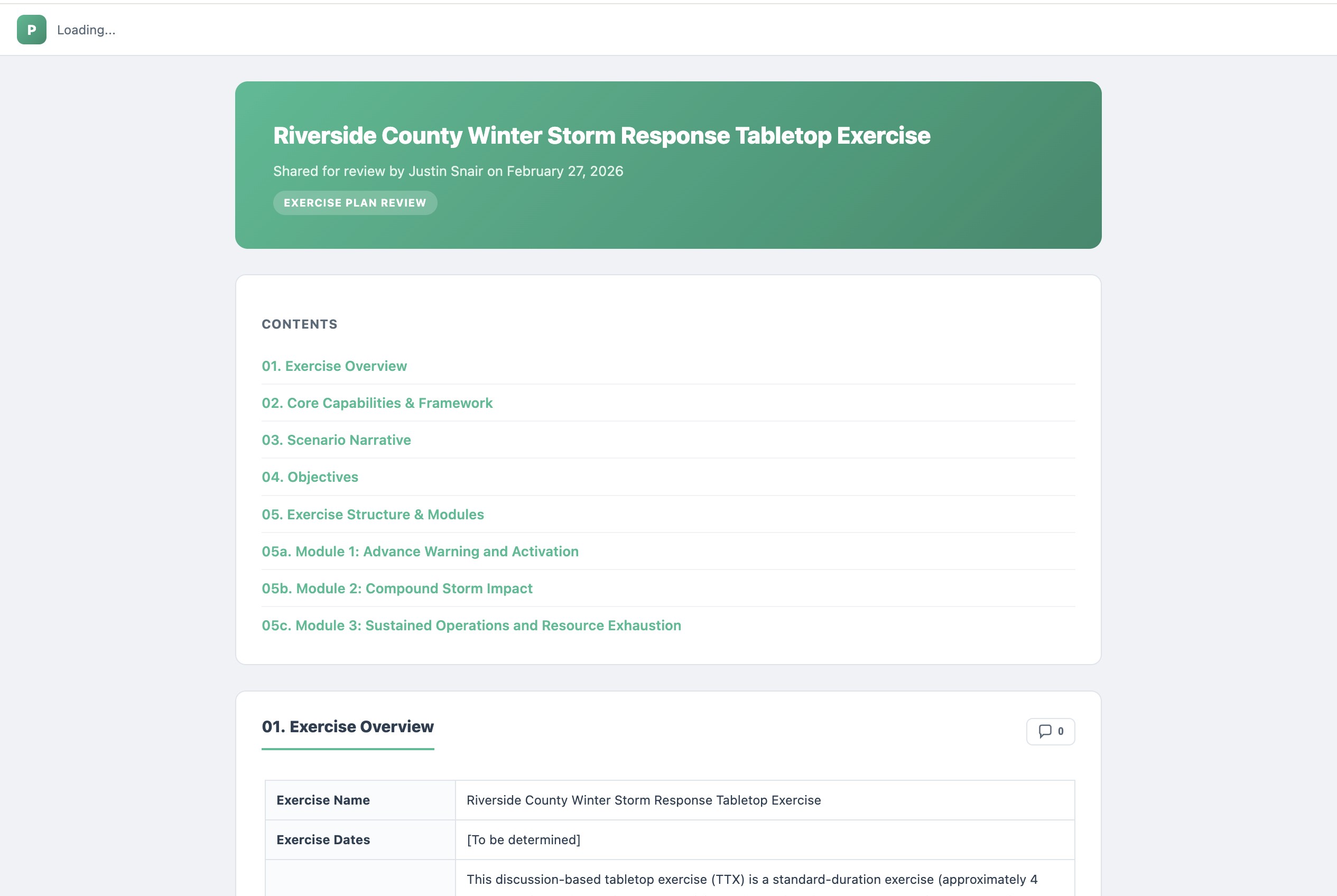

The external review workflow extends this across stakeholders. In-progress or final drafts can be sent to reviewers who can edit, comment, and submit feedback directly.

Preppr receives the submissions, analyzes them, generates a consolidation plan, and — with your approval — updates the content and produces the crosswalk. What typically takes weeks of reconciliation across multiple reviewers becomes a structured, documented process.

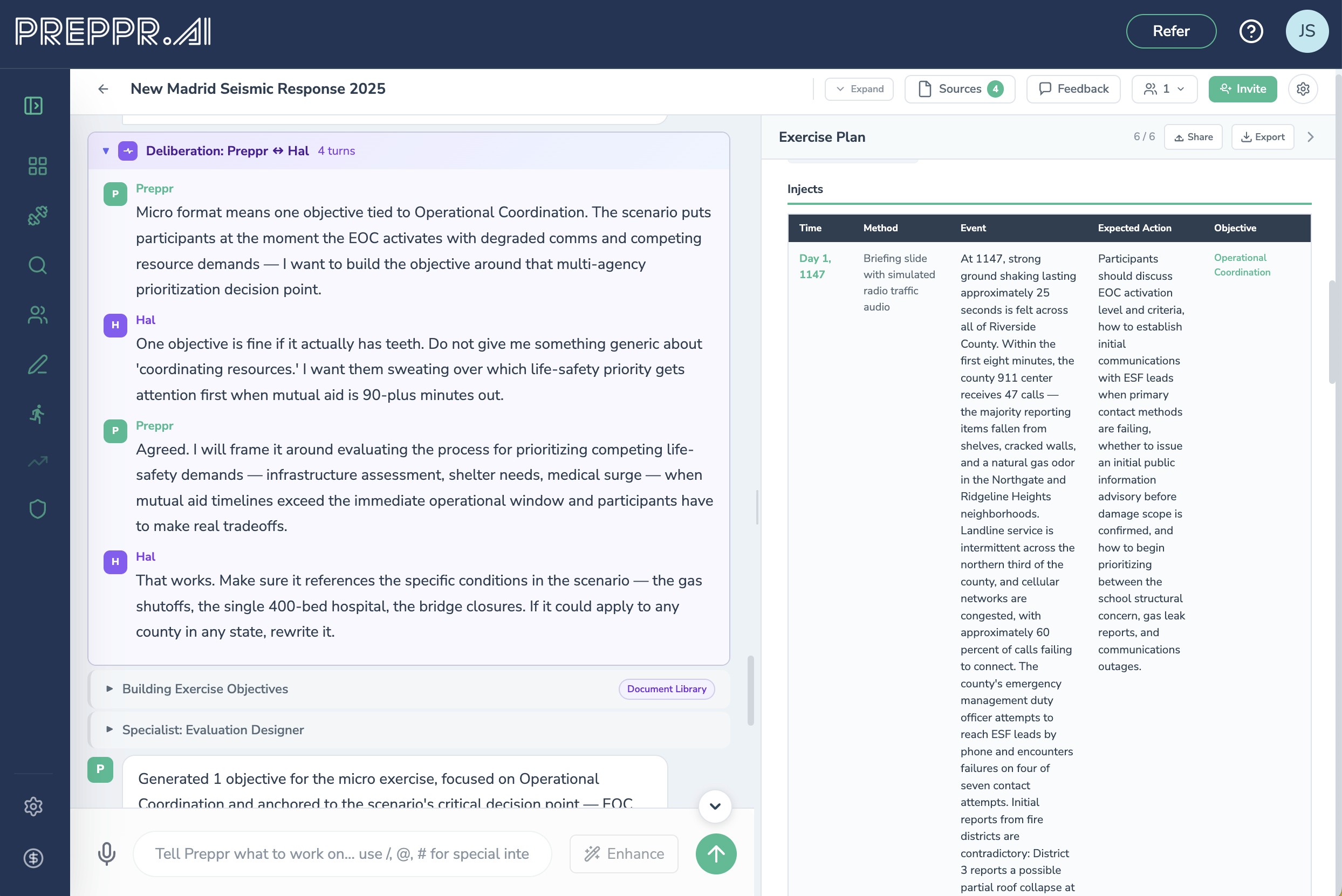

More broadly, Preppr structures every exercise around HSEEP-aligned objectives, functional groups, and capability frameworks from the start. The inject sequence is built to force evaluable decisions — not just discussion topics. Because the design layer is built for documentation, the evaluation structure is already in place before the first participant logs in.

Preppr also maps exercises to FEMA Community Lifelines and FEMA Core Capabilities, ensuring that every tabletop generates findings that connect to the planning frameworks your leadership and funders expect to see.

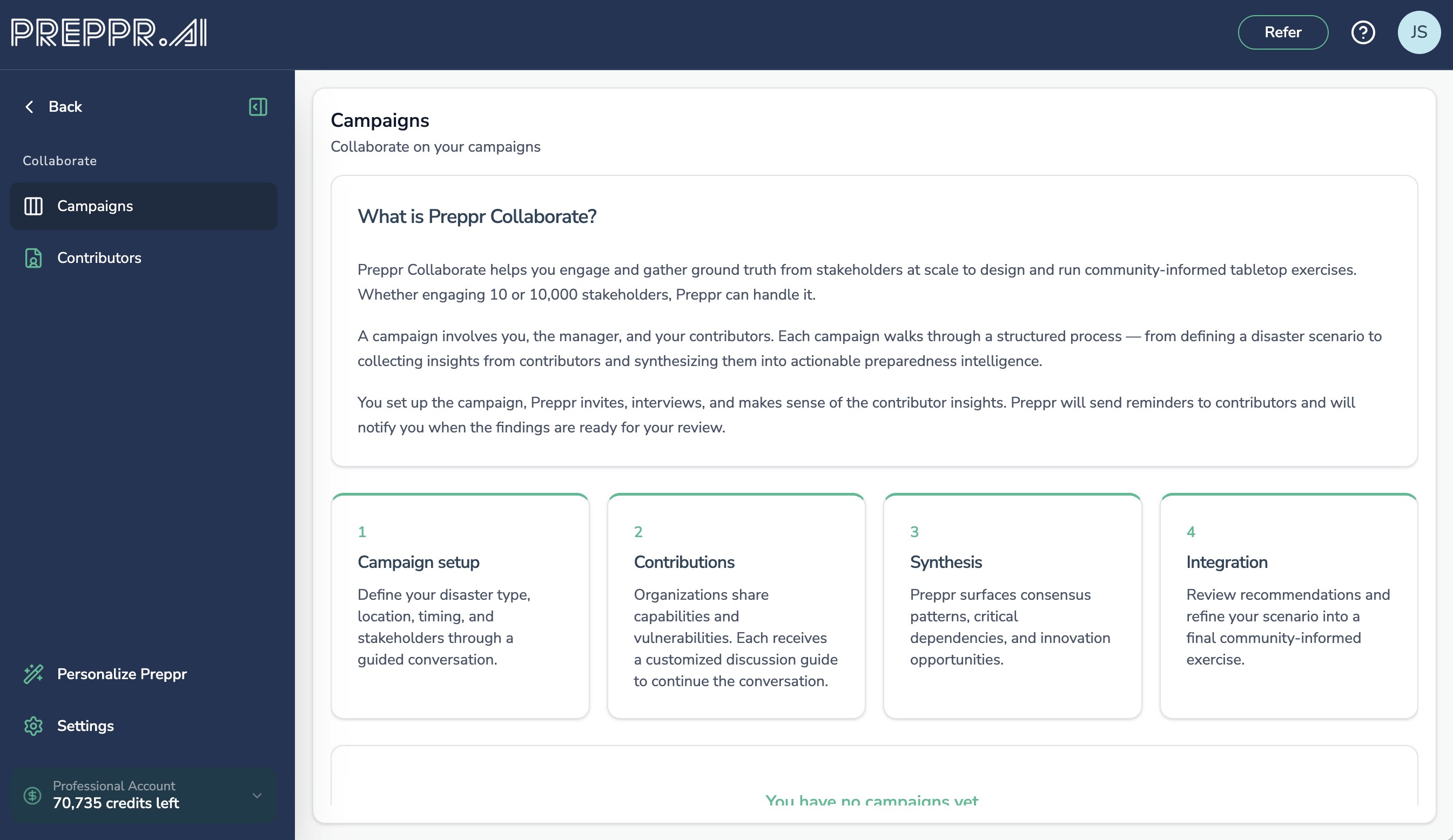

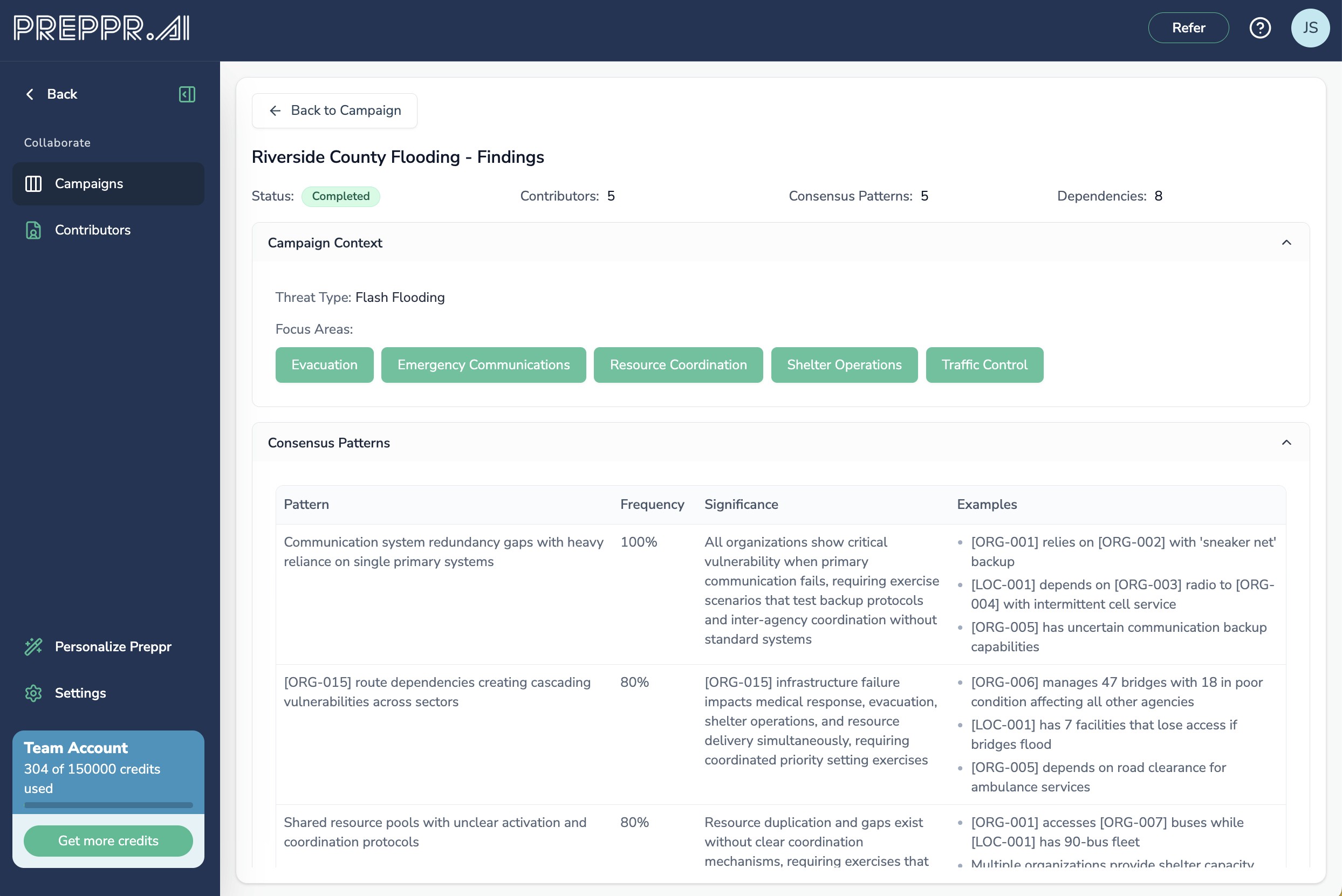

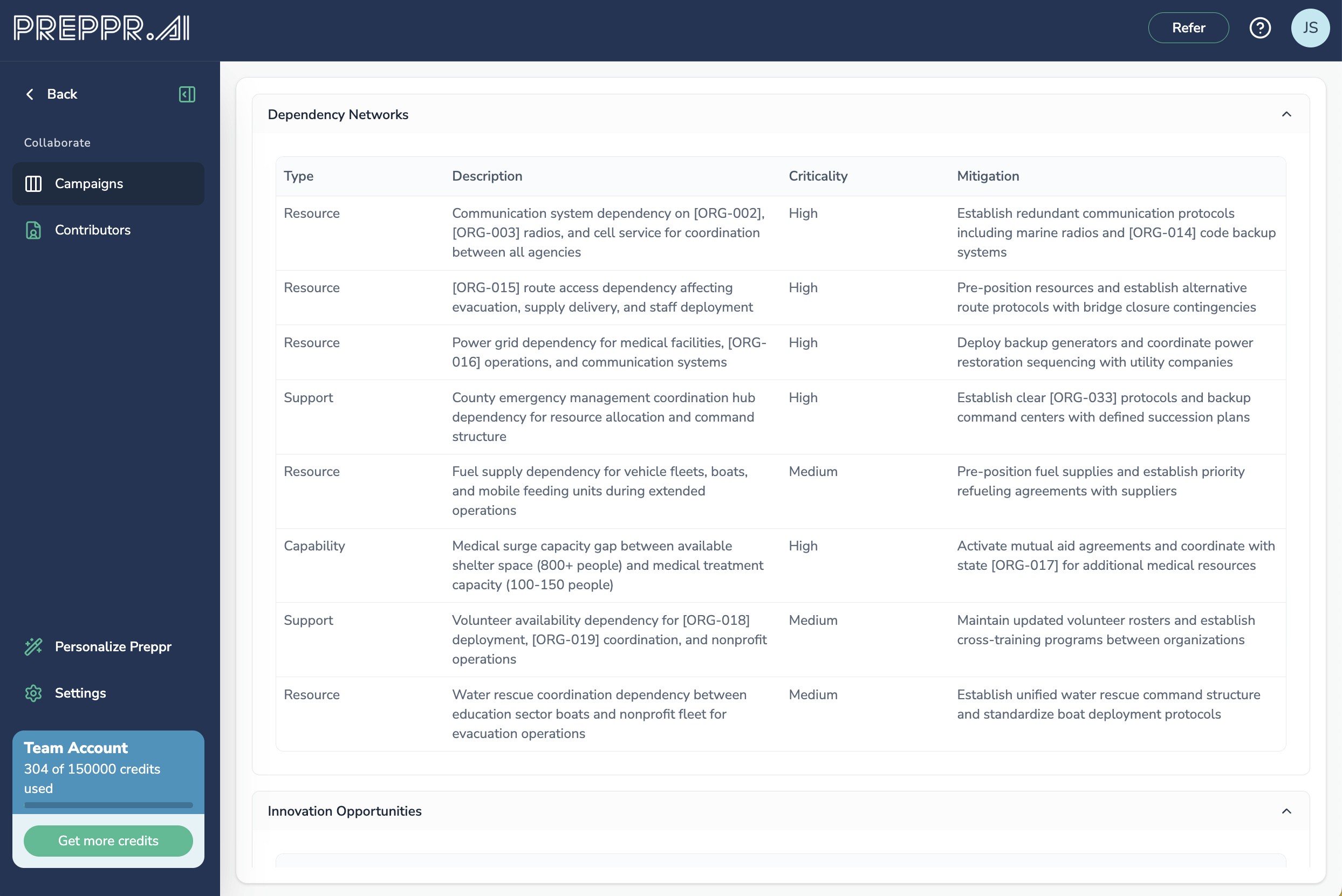

For organizations that want to ground their exercise design in genuine stakeholder intelligence rather than a single planner's assumptions, Preppr Collaborate provides the foundation. Through a structured four-phase workflow — Campaign Setup, Contributions, Synthesis, and Integration — Collaborate aggregates input from anywhere between a handful of stakeholders and a community-wide engagement of thousands. Organizations contribute their capabilities, vulnerabilities, and operational context through guided conversations. The synthesis engine identifies consensus patterns, critical dependencies, and gaps across all submissions, then generates draft inject recommendations that feed directly into exercise design. The result is an exercise grounded in what your stakeholders actually know about their own organizations — not a scenario built in isolation.

Phase 2: Deliver the exercise to generate usable findings

Staff for documentation, not just facilitation

AAR speed improves dramatically when roles are clear and resourced. Facilitator, evaluator, and scribe are different jobs — and many tabletop exercises overload one person with all three.

At minimum:

Facilitator keeps pace, enforces scope, and drives decision points.

Scribe runs a time-stamped decision log and captures key artifacts.

Evaluator(s) focus on specific objectives and record observations in a structured way.

If you are short-staffed, protect the scribe role. A strong scribe is often the difference between a two-day AAR and a two-week AAR.

Use a decision log as the backbone

AARs are easiest to write when you can reconstruct the exercise as a sequence of decision points. A practical decision log captures:

Time (real or simulated)

Decision required

Options considered

Decision made and owner

Constraints and assumptions

Follow-up actions created

This becomes your narrative — and it becomes your improvement plan seed.
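For teams that keep the log in a spreadsheet or script rather than on paper, the fields above map naturally onto a small record type. The following is a minimal sketch, not a prescribed format: the `DecisionEntry` class, its field names, the two helper functions, and the sample entries are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class DecisionEntry:
    """One decision-log row; fields mirror the list above (hypothetical names)."""
    time: datetime
    decision_required: str
    options_considered: list[str]
    decision_made: str
    owner: str
    constraints_and_assumptions: list[str] = field(default_factory=list)
    follow_up_actions: list[str] = field(default_factory=list)


def narrative(log: list[DecisionEntry]) -> list[str]:
    """Render the log in time order as one-line narrative statements."""
    ordered = sorted(log, key=lambda entry: entry.time)
    return [
        f"{e.time:%H:%M} {e.owner} decided: {e.decision_made} "
        f"(options: {'; '.join(e.options_considered)})"
        for e in ordered
    ]


def improvement_seeds(log: list[DecisionEntry]) -> list[str]:
    """Collect every follow-up action as a starting point for the improvement plan."""
    return [action for e in log for action in e.follow_up_actions]


# Sample entries invented for this example, logged out of order on purpose.
log = [
    DecisionEntry(
        time=datetime(2024, 1, 1, 9, 25),
        decision_required="Release first public message?",
        options_considered=["Release now", "Wait for confirmation"],
        decision_made="Release now with a holding statement",
        owner="PIO",
        follow_up_actions=["Document message approval chain"],
    ),
    DecisionEntry(
        time=datetime(2024, 1, 1, 9, 0),
        decision_required="Activate the JIC?",
        options_considered=["Activate", "Monitor"],
        decision_made="Activate the JIC",
        owner="EOC Director",
        constraints_and_assumptions=["Assumed staff are reachable"],
    ),
]

for line in narrative(log):
    print(line)
print(improvement_seeds(log))
```

Sorting by time at render time means the scribe can log entries in whatever order the room produces them, and the follow-up actions fall out as a ready-made list for the hotwash.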

Set ground rules that support clean evaluation

A few ground rules improve both psychological safety and documentation quality:

No-fault learning environment: focus on systems and decisions, not personal performance.

Assumptions are allowed, but must be stated: the scribe captures them, because assumptions often become AAR findings.

When a decision is made, it is logged: the facilitator pauses briefly to confirm wording.

This does not slow the room if you do it consistently.

Use structured capture buckets

A simple rule: every observation should land in one of a few categories.

| Capture bucket | What it is | Why it speeds the AAR |
|---|---|---|
| Decision | A choice made, by someone, at a time | Becomes the narrative backbone |
| Observation | What was done well or poorly, tied to an objective | Turns into strengths and areas for improvement |
| Gap | A missing process, unclear authority, broken dependency | Becomes a finding with a clear "what failed" |
| Root cause hint | Why the gap exists (training, policy, tools, staffing) | Prevents shallow corrective actions |
| Action item | A proposed fix with an owner concept | Seeds the improvement plan immediately |

If evaluators tag notes this way while the exercise runs, your AAR writing becomes compilation and editing, not interpretation.
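To make "compilation, not interpretation" concrete, here is a rough sketch of how tagged notes could be grouped by objective and bucket after the exercise. The `Bucket` enum, the `Note` class, the `compile_by_objective` function, and the sample notes are hypothetical names and data chosen for this example; any note-taking tool that supports two tags per note could do the same job.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    """The five capture buckets from the table above."""
    DECISION = "Decision"
    OBSERVATION = "Observation"
    GAP = "Gap"
    ROOT_CAUSE_HINT = "Root cause hint"
    ACTION_ITEM = "Action item"


@dataclass
class Note:
    """A single evaluator note carrying an objective tag and a bucket tag."""
    objective: str
    bucket: Bucket
    text: str


def compile_by_objective(notes):
    """Group tagged notes as {objective: {bucket label: [note text, ...]}}."""
    grouped = defaultdict(lambda: defaultdict(list))
    for note in notes:
        grouped[note.objective][note.bucket.value].append(note.text)
    # Convert to plain dicts so the result prints and compares cleanly.
    return {obj: dict(buckets) for obj, buckets in grouped.items()}


# Sample notes invented for illustration.
notes = [
    Note("Objective 1", Bucket.DECISION, "Activate the JIC at 0900"),
    Note("Objective 1", Bucket.GAP, "No documented threshold for escalating to the JIC"),
    Note("Objective 2", Bucket.ACTION_ITEM, "Create a lightweight resource request template"),
]

compiled = compile_by_objective(notes)
for objective, buckets in compiled.items():
    print(objective)
    for label, texts in buckets.items():
        print(f"  {label}: {texts}")
```

The grouped output is already shaped like the objective-by-objective analysis section of an AAR, which is the point of tagging during the exercise rather than afterward.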

Capture artifacts in the moment (even if they are imperfect)

Artifacts are the fastest path to a defensible AAR. In a tabletop exercise, artifacts can be drafts and placeholders:

A draft public message or internal notification

A screenshot of the situation status format participants agree to use

A filled-in resource request template

A call-down list with corrections marked

If your organization has templates, use them during the exercise. If you do not, this is your signal to create lightweight templates as part of improvement planning.

Separate parking lot issues from evaluable findings

Tabletop exercises often surface important but non-evaluable issues (budget constraints, long-term staffing shortages, vendor dissatisfaction). Capture them, but do not let them clog the AAR. Use a parking lot log with two fields: "topic" and "next step owner."

How Preppr facilitates and captures in real time

This is where Preppr's end-to-end delivery capability changes the calculus for under-resourced teams.

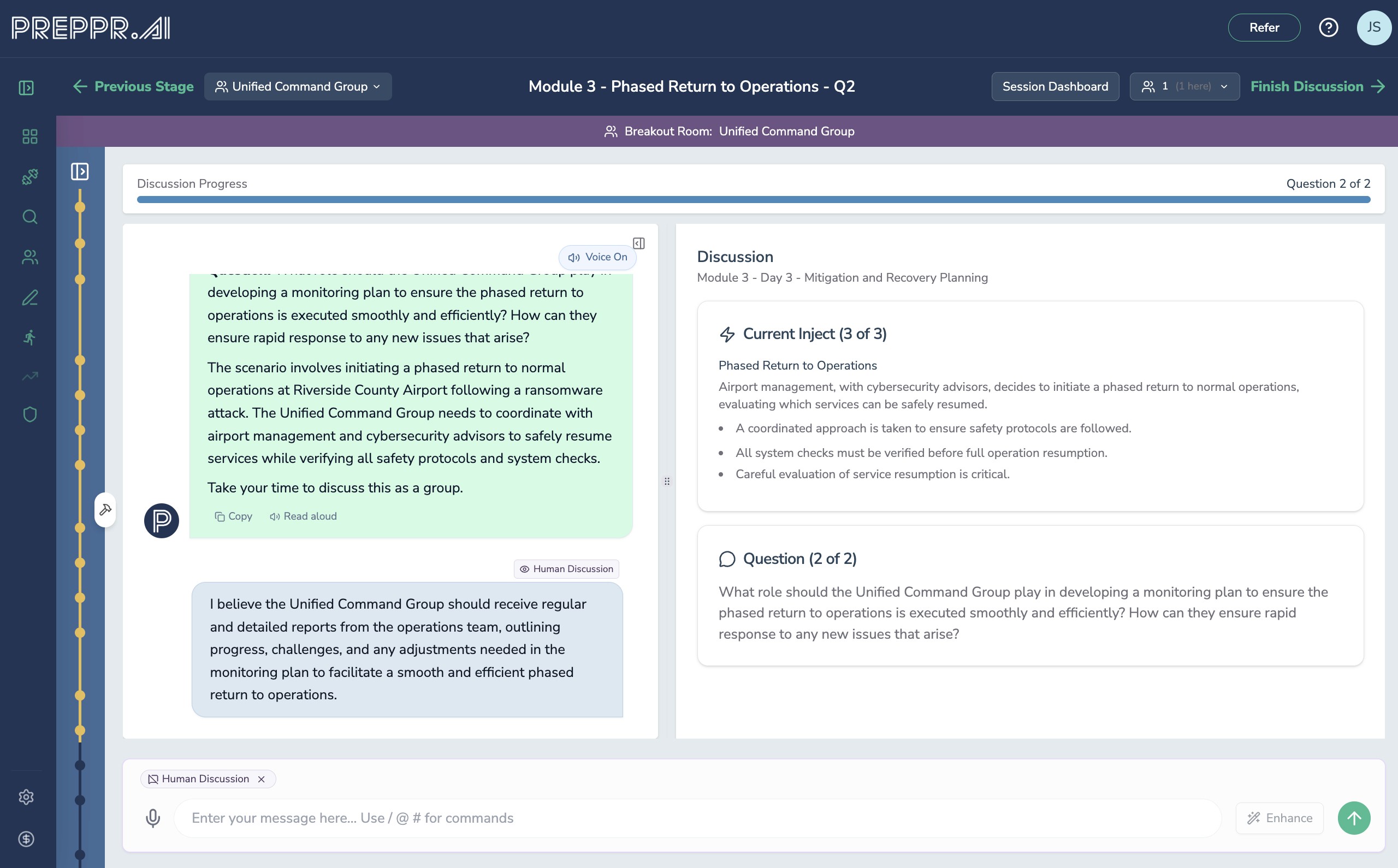

Preppr Exercise guides participants through every phase of exercise delivery — Welcome and check-in, Exercise Overview, Scenario Presentation, Module Discussion, Group Report-Out, Cross-Group Synthesis, Final Report, Hotwash, and Improvement Planning — with purpose-built prompting for each phase. The facilitator doesn't need to hold all of this in their head or manage a complex runsheet; Preppr handles the structure.

During module discussions, Preppr captures a response synthesis for every question: a two- to four-sentence summary of what the group decided, validated by participants before moving forward. This is the documentation artifact that simplifies post-exercise report writing, because the "final answer" to every question is already written, reviewed, and attributed.

Preppr also tracks document intelligence during discussions. When uploaded plans are available, the system identifies gaps between what participants say they would do and what their documented procedures actually specify — providing the kind of evidence-based findings ("Group stated they would use phone calls to request mutual aid, unaware that the Regional Mutual Aid Agreement Section 2.3 requires WebEOC-based requests with 4-hour response commitments") that practitioners normally reconstruct hours after the exercise ends.

Multi-group exercises benefit from Preppr's cross-group synthesis capability, which surfaces coordination gaps and conflicting assumptions across functional groups — the systemic findings that single-group documentation almost always misses.

Throughout delivery, the decision log, observations, gaps, root cause hints, and action items are being built automatically in the background. The scribe role still matters, but the platform is doing the heavy lifting on structure.

Phase 3: Document, debrief, and improve

Make the hotwash a draft AAR, not a group therapy session

A hotwash can either accelerate the AAR or derail it. The difference is facilitation discipline.

Instead of asking "how did it go," use objective-anchored prompts:

"For Objective 1, what specifically worked as designed?"

"Where did you lose time, and why?"

"What decision authority was unclear or contested?"

"What information did you wish you had, and who owns it?"

Convert discussion into draft corrective actions on the spot. You are not finalizing wording in the room — you are capturing:

Corrective action (what will change)

Owner (person or role)

Due date concept (near-, mid-, or long-term)

Validation method (how you will test the fix)

This turns the AAR from a document into a management tool.

Write a faster, better AAR using an evidence-first structure

If you followed the practices above, AAR writing becomes mostly assembly.

A practical structure that aligns with HSEEP-style expectations:

Executive summary that is honest and specific. Decision-makers read this. Avoid generic statements. Include the top strengths worth sustaining, the highest-risk gaps, and any cross-cutting systemic issues (approval latency, vendor dependencies, unclear roles).

Analysis organized by objective, with evidence. For each objective: what was expected, what happened (evidence-based), strengths, areas for improvement, root cause themes.

The key is to write findings as evidence statements:

"The organization did not have a documented threshold for escalating to the JIC, resulting in a 25-minute debate and inconsistent messaging drafts across units."

That is a finding you can act on.

Improvement plan that is implementable. AARs fail when corrective actions are unowned or untestable. At minimum, every action needs: corrective action, responsible party, timeline, and how completion will be validated.
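The four minimum fields lend themselves to a simple completeness check before the improvement plan is published. The sketch below is one possible way to do that, under the assumption that actions are tracked as structured records; `CorrectiveAction`, `incomplete_fields`, and the sample actions are illustrative names and data, not part of HSEEP or any specific tool.

```python
from dataclasses import dataclass, fields


@dataclass
class CorrectiveAction:
    """The four minimum fields named above (hypothetical field names)."""
    corrective_action: str
    responsible_party: str
    timeline: str
    validation_method: str


def incomplete_fields(action):
    """Return the names of required fields that are blank or whitespace-only."""
    return [f.name for f in fields(action) if not getattr(action, f.name).strip()]


# A vague action of the kind that is easy to write and hard to implement.
vague = CorrectiveAction("Improve coordination", "", "", "")

# A fuller action; the specifics are invented for illustration.
specific = CorrectiveAction(
    corrective_action="Document a threshold for escalating to the JIC",
    responsible_party="PIO",
    timeline="Near-term",
    validation_method="Re-test in the next tabletop exercise",
)

for action in (vague, specific):
    gaps = incomplete_fields(action)
    status = "ready" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{action.corrective_action} -> {status}")
```

A check like this only catches blank fields. Whether an action is actually testable is still a judgment call for the people in the room.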

Capability ratings that drive accountable improvement planning

HSEEP's AAR/IP approach uses a four-level performance rating system for each capability tested:

P (Performed without Challenges): Objectives achieved without notable issues

S (Performed with Some Challenges): Objectives achieved with notable challenges

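M (Performed with Major Challenges): Objectives achieved, but with significant issues such as negative impacts on other activities, added health or safety risks, or departures from applicable plans, policies, and procedures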

U (Unable to be Performed): Objectives could not be achieved

These ratings only hold up when they are tied to specific evidence from the exercise. A capability rated "S" should come with a clear explanation of what the challenge was — otherwise leadership has no basis for prioritizing the improvement investment.
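One lightweight way to enforce that discipline is to refuse to accept a rating that arrives without its supporting evidence. The following sketch assumes ratings are recorded as structured data; `Rating`, `CapabilityRating`, `review_issues`, and the sample records are hypothetical and shown only to illustrate the rule.

```python
from dataclasses import dataclass, field
from enum import Enum


class Rating(Enum):
    """The four rating levels listed above."""
    P = "Performed without Challenges"
    S = "Performed with Some Challenges"
    M = "Performed with Major Challenges"
    U = "Unable to be Performed"


@dataclass
class CapabilityRating:
    capability: str
    rating: Rating
    evidence: list[str] = field(default_factory=list)
    challenge: str = ""  # explanation expected for any rating other than P


def review_issues(item):
    """List reasons this rating would not yet hold up in an AAR."""
    issues = []
    if not item.evidence:
        issues.append("no evidence cited")
    if item.rating is not Rating.P and not item.challenge.strip():
        issues.append("challenge not explained")
    return issues


# Sample ratings invented for illustration.
unsupported = CapabilityRating("Public information", Rating.S)
supported = CapabilityRating(
    "Public information",
    Rating.S,
    evidence=["Decision log: 25-minute debate over JIC escalation"],
    challenge="No documented threshold for escalating to the JIC",
)

print(review_issues(unsupported))
print(review_issues(supported))
```

Running the check during the hotwash, while evaluators are still present, is usually easier than chasing missing evidence a week later.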

Avoid the most common slow-AAR traps

| Trap | What it looks like | Better practice |
|---|---|---|
| The AAR tries to cover everything | Every conversation becomes a finding | Limit scope to objectives, park the rest |
| Notes are unstructured | Pages of narrative with no tags | Use decision log + objective-tagged observations |
| Findings lack root cause | "Need more training" repeated everywhere | Capture constraints and why decisions were hard |
| Corrective actions are not testable | "Improve coordination" | Define a change plus how it will be validated |
| No governance after publication | AAR is filed and forgotten | Assign owners and review progress on a cadence |

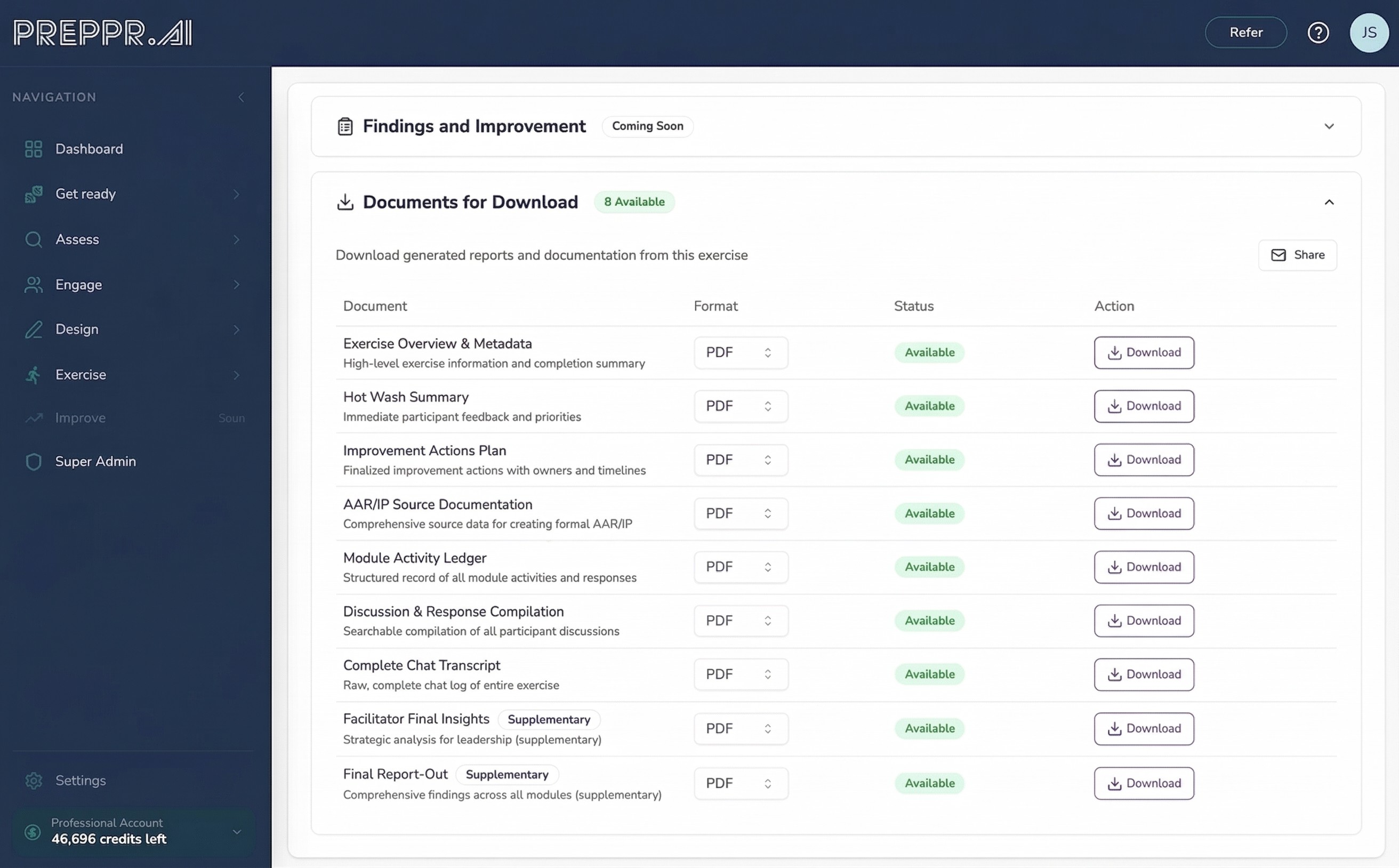

How Preppr generates the AAR/IP

By the time Preppr's exercise delivery workflow reaches the Improvement Planning phase, the system already has:

Approved response syntheses for every question in every module

Module report-outs with POETE analysis (Planning, Organization, Equipment, Training, and Exercises)

Cross-module synthesis identifying systemic patterns

P/S/M/U capability ratings with evidence

Hotwash findings organized by strength and gap

Draft improvement actions with SMART framing

The Improvement Planning session uses this pre-generated material as a structured starting point. Draft actions are presented to the group for collaborative refinement — owners, timelines, success criteria, blockers, and validation methods are confirmed in the room. The resulting improvement plan isn't a wish list; it's a set of explicit commitments with names attached.

The documentation that comes out of this process reflects everything captured across the full exercise lifecycle. Because every section traces back to validated participant responses, facilitators aren't writing an interpretation of what happened — they're assembling documentation that participants already approved.

Turn AARs into a continuous learning cycle

AAR speed matters, but what matters more is whether the organization actually improves.

A lightweight governance loop that works across emergency management, healthcare, and private-sector resilience teams:

Publish the AAR quickly while memory is fresh (even if some sections are "draft pending validation").

Hold a corrective action review with leadership to approve owners and timelines.

Track corrective actions like operational work, not like training paperwork.

Re-test the highest-risk fixes in the next tabletop exercise, so improvements are validated, not assumed.

When you do this, tabletop exercises stop being annual events and start being part of operational readiness.

The compounding effect is significant. Each exercise cycle produces an approved improvement plan. The next exercise tests whether those improvements worked. Preppr's knowledge graph captures organizational intelligence from every exercise cycle, so institutional memory doesn't walk out the door when personnel turn over and every subsequent exercise starts from a richer baseline.

The simplest takeaway

If you want faster, better AARs, stop treating the AAR like a writing task. Treat it like the output of a well-instrumented exercise lifecycle.

Clear objectives, disciplined decision capture, artifact collection, and objective-tied hotwash prompts will do more for your AAR quality than any post-exercise writing sprint. And once your AARs consistently produce implementable improvement plans, your tabletop exercise program becomes a true readiness engine — not a compliance checkbox.

For organizations looking to compress the timeline across all three phases — design, delivery, and documentation — Preppr is built around this end-to-end lifecycle. The platform handles the structure so practitioners can focus on the judgment that only they can provide: the decisions, the tradeoffs, and the organizational context that no AI can substitute for.
